I'm leaving AI alignment – you better stay

This was originally published on LessWrong on 12 March 2020. It's my most popular article there. I'm republishing it here because it might be easier to find and because I think it's generally useful, not just for AI alignment researchers. Original: LessWrong: I'm leaving AI alignment – you better stay. I suggest reading the comments there.

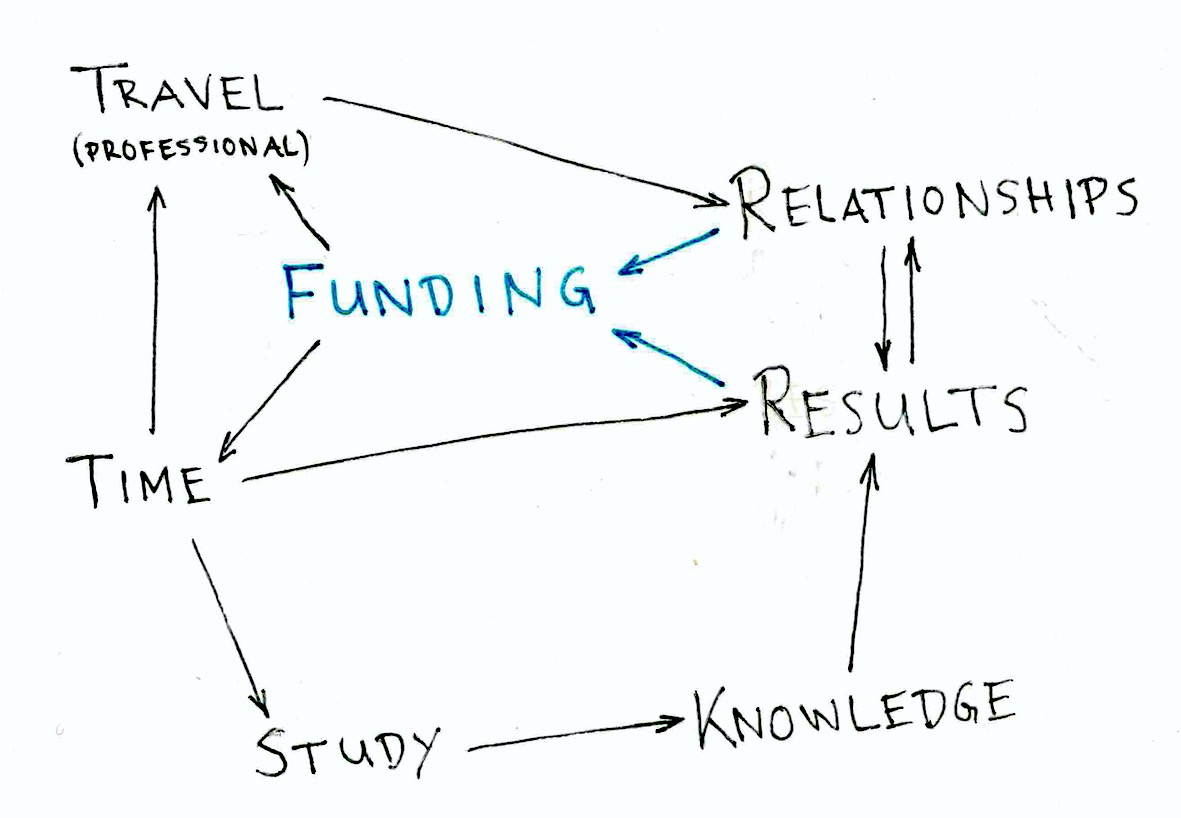

This diagram summarizes the requirements for independent AI alignment research and how they are connected.

In this post I'll outline my four-year-long attempt at becoming an AI alignment researcher. It's an ‘I did X [including what I did wrong], and here's how it went’ post (see also jefftk's More writeups!). I'm not complaining about how people treated me – they treated me well. And I'm not trying to convince you to abandon AI alignment research – you shouldn't. I'm not saying that anyone should have done anything differently – except myself.

Requirements

Funding

Funding is the main requirement, because it enables everything else. Thanks to Paul Christiano I had funding for nine months between January 2019 and January 2020. After that I applied to the EA Foundation Fund (EAFF; now Center on Long-Term Risk Fund) and the Long-Term Future Fund (LTFF) for a grant, and they rejected my applications. Now I don't know of any other promising sources of funding. I also don't know of any AI alignment research organisation that would hire me as a remote worker.

How much funding you need varies. I settled on 5 kUSD per month, which sounds like a lot when you're a student but not much when you look at market rates for software developers/ML engineers/ML researchers. On top of that, I'm essentially a freelancer who has to pay his own social insurance, take time off to do accounting and taxes, and build runway for dry periods.

Results and relationships

In any job you must get results and build relationships. If you don't, you don't earn your pay. (Manager Tools talks about results and relationships all the time. See for example What You've Been Taught About Management is Wrong or First Job Fundamentals.)

The results I generated weren't obviously good enough to compel Paul to continue to fund me. And I didn't build good enough relationships with people who could have convinced the LTFF and EAFF fund managers that I have the potential they're looking for.

Time

Funding buys time, which I used for study and research.

Another aspect of time is how effectively and efficiently you use it. I'm good at being effective, not so good at being efficient: I spend a lot of time on non-research, mostly studying Japanese and doing sports. And dawdling. I noticed the dawdling problem at the end of last year and got it under control at the beginning of this year (see my time tracking). Too late.

Added 2020-03-16: I also need a lot of sleep in order to do this kind of work: about 8.5 h per day.

Travel and location

I live in Kagoshima City in southern Japan, which is far away from the AI alignment research hubs. This means that I don't naturally meet AI alignment researchers and build relationships with them. I could have compensated for this by travelling to summer schools, conferences etc. But I missed the best opportunities and I felt that I didn't have the time and money to take the second-best opportunities. Of course, I could also relocate to one of the research hubs. But I don't want to do that for family reasons.

I did start maintaining the Predicted AI alignment event/meeting calendar in order to avoid missing opportunities again. And I did apply to the AI Safety Camp Toronto 2020 and got accepted. They even chose my research proposal for one of the teams. But I failed to procure the funding that would have supported me from March through May, when the camp takes place.

Knowledge

I know more than most young AI alignment researchers about how to make good software, how to write well and how to work professionally. I know less than most young AI alignment researchers about maths, ML and how to do research. The latter appear to be more important for getting results in this field.

Study

Why do I know less about maths, ML and how to do research? Because my formal education goes only as far as a BSc in computer science, which I finished in 2014 (albeit with very good grades). There's a big gap between what I remember from that and what an MSc or PhD graduate knows. I tried to make up for it with months of self-study (time bought with Paul's funding), but it wasn't enough.

Added 2020-03-26: Probably my biggest strategic mistake was to focus on producing results and trying to get hired from the beginning. If I had spent 2016–2018 studying ML basics, I would have been much quicker to produce results in 2019/2020 and convince Paul or the LTFF to continue funding me.

Added 2020-12-09: Perhaps trying to produce results by doing projects is fine. But then I should have done projects in one area and not jumped around the way I did. This way I would have built experience upon experience, rather than starting from scratch every time. (2021-05-25: I would also have continued to build relationships with researchers in that one area.) Also, it might have been better to focus on the area that I was already interested in – type systems and programming language theory – rather than the area that seemed most relevant to AI alignment – machine learning.

Another angle on this, in terms of Jim Collins (see Jim Collins — A Rare Interview with a Reclusive Polymath (#361)): I'm not ‘encoded’ for reading research articles and working on theory. I am probably ‘encoded’ for software development and management. I'm skeptical, however, about this concept of being ‘encoded’ for something.

All for nothing?

No. I built relationships and learned much that will help me be more useful in the future. The only thing I'm worried about is that I will forget what I've learned about ML for the third time.

Conclusion

I could go back to working for money part-time, patch the gaps in my knowledge, results and relationships, and get back on the path of AI alignment research. But I don't feel like it. I spent four years doing ‘what I should do’ and was ultimately unsuccessful. Now I'll try and do what is fun, and see if it goes better.

What is fun for me? Software/ML development, operations and, probably, management. I'm going to find a job or contracting work in that direction. Ideally I would work directly on mitigating x-risk, but this is difficult, given that I want to work remotely. So it's either earning to give, or building an income stream that can support me while I do direct work. The latter can be through saving money and retiring early, or through building a ‘lifestyle business’ the Tim Ferriss way.

Another thought on fun: When I develop software, I know when it works and when it doesn't work. This is satisfying. Doing research always leaves me full of doubt about whether what I'm doing is useful. I could fix this by gathering more feedback. For that, again, I would need to buy time and build relationships.

Timeline

For reference I'll list what I've done in the area of AI alignment. Feel free to stop reading here if you're not interested.

04/2016–03/2017 Research student at Kagoshima University. Experimented with interrupting reinforcement learners and wrote Questions on the (Non-)Interruptibility of Sarsa(λ) and Q-learning. Spent a lot of time trying to get a job or internship at an AI alignment research organization.

04/2017–12/2017 Started working part-time to support myself. Began implementing point-based value iteration (GitHub: rmoehn/piglet_pbvi) in order to compel BERI to hire me.

12/2017–04/2018 Helped some people earn a lot of money for EA.

05/2018–12/2018 Still working part-time. Applied to Ought. Didn't get hired, but made improvements to their implementation of an HCH-like program (GitHub: rmoehn/patchwork).

12/2018–02/2019 Left my part-time job. Funded by Paul Christiano, implemented an HCH-like program with reflection and time travel (GitHub: rmoehn/jursey).

06/2019–02/2020 Read sequences on the AI Alignment Forum, came up with Twenty-three AI alignment research project definitions, chose ‘IDA with RL and overseer failures’ (GitHub: rmoehn/farlamp), wrote analyses, studied ML basics for the third time in my life, adapted code (GitHub: rmoehn/amplification) and ran experiments.

08/2019–06/2020 Maintained the Predicted AI alignment event/meeting calendar.

10/2019–sometime in 2020 Organized the Remote AI Alignment Writing Group (see also Remote AI alignment writing group seeking new members).

01/2020–03/2020 Applied to, got admitted to and started contributing to the AISC Toronto 2020.

Added 2021-05-25: 10/2020–01/2021 Coordinated the application process for AI Safety Camp #5.

Thanks

…to everyone who helped me and treated me kindly over the past four years. This encompasses just about everyone I've interacted with. Those who helped me most I've already thanked in other places. If you feel I haven't given you the appreciation you deserve, please let me know and I'll make up for it.